What people are saying

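Ben Allal, lead machine learning engineer on Hugging Face's SmolLM project, explained in an interview: "With SmolLM's real-world performance, we have shown that data quality is the key factor in how good a model is. We developed new methods to carefully curate high-quality data, combining web sources with synthetic data, and used them to build the best-performing small models."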

Datasets

The models' strong performance rests on carefully curated training data, which is also the main innovation of this work.

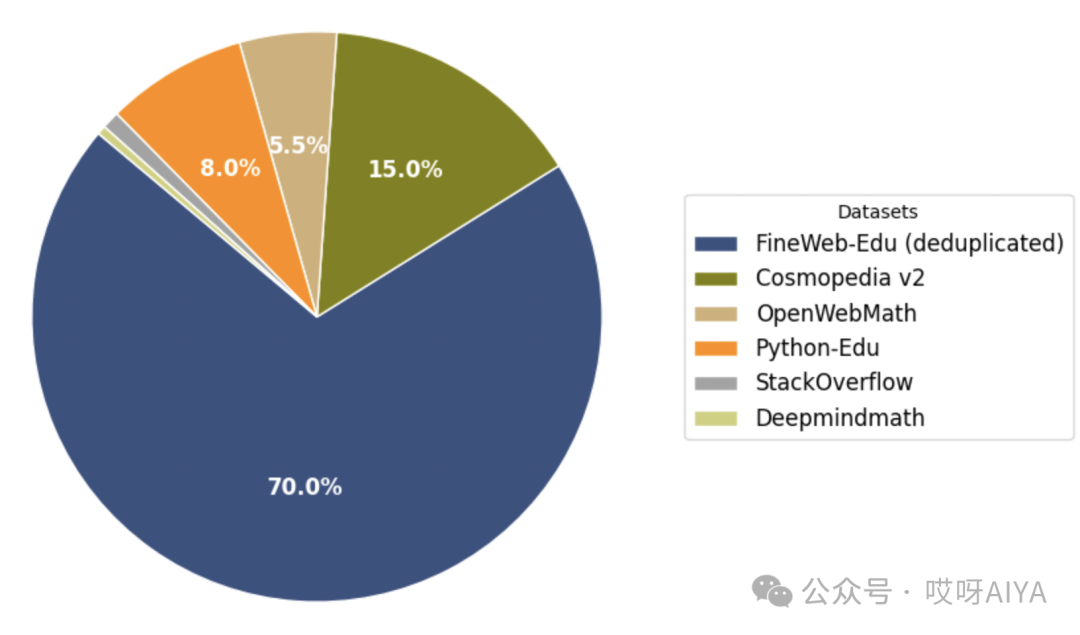

SmolLM-Corpus dataset:

- Cosmopedia v2: synthetic textbooks and stories generated by Mixtral (38B tokens)
- Python-Edu: educational Python samples from The Stack (4B tokens)
- FineWeb-Edu (deduplicated): curated educational web content from FineWeb (220B tokens)

Training tokens drawn from SmolLM-Corpus:

- 135M and 360M models: 600B tokens
- 1.7B model: 1T tokens
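Comparing the training budgets with the corpus sizes listed above gives a rough sense of how often the data is repeated. This is a sketch under a loud assumption: it treats the whole corpus as consumed uniformly, which the post does not state, and the real mixture may weight the sources differently.

```python
# Corpus sizes and training budgets in billions of tokens, as listed in the post
CORPUS_B_TOKENS = {"Cosmopedia v2": 38, "Python-Edu": 4, "FineWeb-Edu (deduplicated)": 220}
TRAIN_B_TOKENS = {"135M": 600, "360M": 600, "1.7B": 1000}


def passes_over_corpus(train_b_tokens, corpus_b_tokens):
    # ASSUMPTION: every source is sampled uniformly; the real mixture weights are unknown here
    return train_b_tokens / sum(corpus_b_tokens.values())


for model, budget in TRAIN_B_TOKENS.items():
    print(f"{model}: ~{passes_over_corpus(budget, CORPUS_B_TOKENS):.1f} passes over the corpus")
```

Under that assumption, 600B tokens is about 2.3 passes over the 262B-token corpus, and 1T tokens about 3.8 passes.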

Instruction-tuning dataset: StarCoder2-Self-OSS-Instruct

DPO datasets:

- 135M and 1.7B models: HelpSteer
- 360M model: argilla/dpo-mix-7k

All were trained for only one epoch.
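For reference, DPO trains on pairs of preferred ("chosen") and rejected responses, comparing the model being tuned against a frozen reference model. Below is a minimal sketch of the per-pair DPO loss computed from summed log-probabilities of each response; `beta=0.1` is an assumed value, since the post does not give the hyperparameters used for SmolLM, and this is not the training code the authors used.

```python
import math


def softplus(x):
    # numerically stable log(1 + exp(x))
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def dpo_loss(policy_chosen_logp, policy_rejected_logp,
             ref_chosen_logp, ref_rejected_logp, beta=0.1):
    """Per-pair DPO loss; inputs are summed token log-probs of each response.

    beta=0.1 is an assumed default, not a value reported for SmolLM.
    """
    # how much more the policy favours each response than the frozen reference does
    chosen_logratio = policy_chosen_logp - ref_chosen_logp
    rejected_logratio = policy_rejected_logp - ref_rejected_logp
    margin = beta * (chosen_logratio - rejected_logratio)
    # -log(sigmoid(margin)) is equal to softplus(-margin)
    return softplus(-margin)
```

When the policy still equals the reference, the margin is zero and the loss is log 2; it falls as the policy raises the chosen response's likelihood relative to the rejected one.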

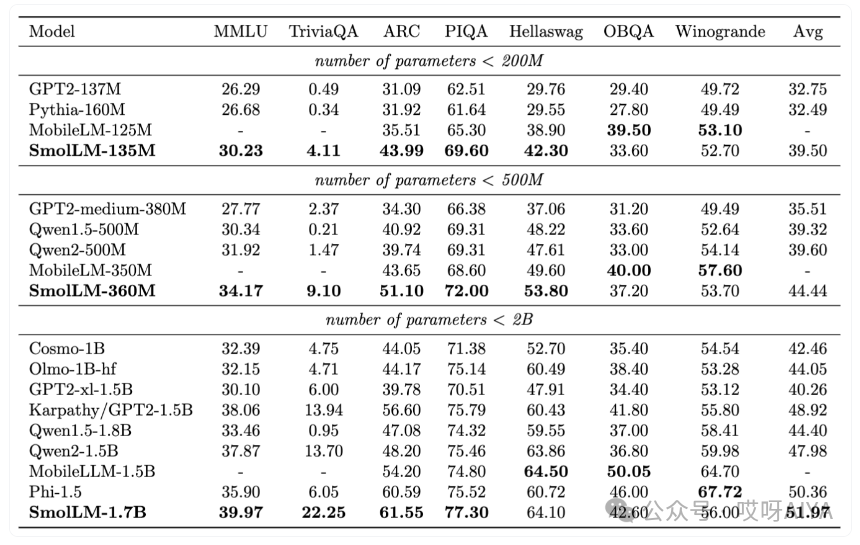

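Performance

On most tasks, and on the average score, the models achieve state-of-the-art results among models of the same size, although they are not the best on every benchmark.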

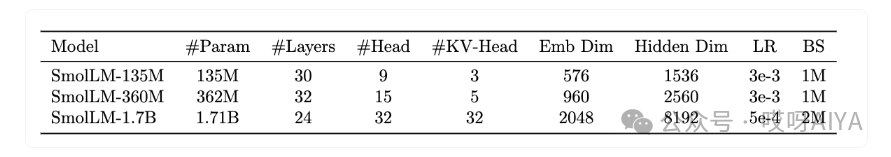

Model architecture

Configuration

Self-attention uses GQA (grouped-query attention). The detailed configuration is as follows:

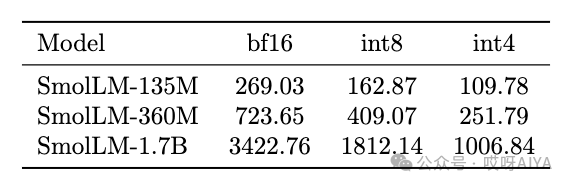
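The core idea of GQA is that several query heads share one key/value head, which shrinks the KV cache compared with standard multi-head attention. Here is a minimal NumPy sketch of causal grouped-query attention for a single sequence. The function name, the (heads, seq_len, head_dim) layout and the 9-query/3-KV head counts are illustrative choices, not SmolLM's actual implementation; projections, positional encoding and batching are omitted.

```python
import numpy as np


def softmax(x, axis=-1):
    # subtract the row max for numerical stability
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def grouped_query_attention(q, k, v):
    """Causal GQA for one sequence (illustrative sketch).

    q: (n_heads, seq_len, head_dim); k, v: (n_kv_heads, seq_len, head_dim).
    """
    n_heads, seq_len, head_dim = q.shape
    n_kv_heads = k.shape[0]
    if n_heads % n_kv_heads != 0:
        raise ValueError("n_heads must be a multiple of n_kv_heads")
    group_size = n_heads // n_kv_heads
    # share each KV head across `group_size` consecutive query heads
    k = np.repeat(k, group_size, axis=0)
    v = np.repeat(v, group_size, axis=0)
    scores = q @ k.transpose(0, 2, 1) / np.sqrt(head_dim)
    # causal mask: position i may only attend to positions <= i
    mask = np.triu(np.ones((seq_len, seq_len), dtype=bool), k=1)
    scores = np.where(mask, -np.inf, scores)
    return softmax(scores, axis=-1) @ v


rng = np.random.default_rng(0)
q = rng.standard_normal((9, 4, 8))  # 9 query heads (illustrative)
k = rng.standard_normal((3, 4, 8))  # 3 shared KV heads (illustrative)
v = rng.standard_normal((3, 4, 8))
out = grouped_query_attention(q, k, v)
print(out.shape)  # (9, 4, 8)
```

With 9 query heads and 3 KV heads, query heads 0-2 read KV head 0, heads 3-5 read KV head 1, and so on; `np.repeat` along the head axis encodes exactly that mapping, while only 3 KV heads ever need to be cached.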

Memory usage

The models' GPU memory footprint is given below; quantized ONNX weights are also officially available:
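As a rough cross-check, weight memory is approximately parameter count × bits per parameter / 8. The sketch uses the nominal sizes in the model names (actual counts differ slightly) and ignores activations, the KV cache and runtime overhead, so real usage will be higher; `weight_memory_gb` is a hypothetical helper, not part of any library.

```python
# Nominal parameter counts taken from the model names (actual counts differ slightly)
NOMINAL_PARAMS = {"135M": 135e6, "360M": 360e6, "1.7B": 1.7e9}


def weight_memory_gb(n_params, bits_per_param):
    # weights only: excludes activations, KV cache and framework overhead (1 GB = 1e9 bytes)
    return n_params * bits_per_param / 8 / 1e9


for name, n in NOMINAL_PARAMS.items():
    row = ", ".join(f"{bits}-bit: {weight_memory_gb(n, bits):.2f} GB" for bits in (32, 16, 8, 4))
    print(f"{name}: {row}")
```

At 16-bit precision this gives roughly 0.27 GB, 0.72 GB and 3.4 GB of weights for the three models; quantizing to 8 or 4 bits halves or quarters those figures again.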

Context length: all of these models support 2048 tokens (longer contexts are possible after fine-tuning).
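Because the prompt and the generated tokens share the same 2048-token window, a long prompt has to be trimmed to leave room for `max_new_tokens`. A minimal sketch with a hypothetical helper `fit_to_context` that keeps the most recent prompt tokens:

```python
CONTEXT_LEN = 2048  # from the post


def fit_to_context(token_ids, max_new_tokens, context_len=CONTEXT_LEN):
    # hypothetical helper: reserve room for generation, keep the most recent prompt tokens
    budget = context_len - max_new_tokens
    if budget <= 0:
        raise ValueError("max_new_tokens must be smaller than the context length")
    return token_ids[-budget:]


prompt_ids = list(range(3000))  # stand-in for a tokenized long prompt
trimmed = fit_to_context(prompt_ids, max_new_tokens=100)
print(len(trimmed))  # 1948
```

In a chat setting, dropping whole earlier messages rather than raw tokens keeps the chat-template markers intact.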

Tokenizer: trained on SmolLM-Corpus, with a vocabulary size of 49152.
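The vocabulary size fixes the size of the token-embedding table: vocab_size × hidden_size parameters. The hidden sizes below (576, 960 and 2048) are assumptions for illustration; check each checkpoint's `config.json` before relying on them.

```python
VOCAB_SIZE = 49152  # from the post

# ASSUMED hidden sizes, for illustration only; verify against each checkpoint's config.json
ASSUMED_HIDDEN_SIZE = {"135M": 576, "360M": 960, "1.7B": 2048}
NOMINAL_PARAMS = {"135M": 135e6, "360M": 360e6, "1.7B": 1.7e9}


def embedding_params(vocab_size: int, hidden_size: int) -> int:
    # one hidden_size-dimensional vector per vocabulary entry
    return vocab_size * hidden_size


for name, hidden in ASSUMED_HIDDEN_SIZE.items():
    n = embedding_params(VOCAB_SIZE, hidden)
    share = n / NOMINAL_PARAMS[name]
    print(f"{name}: {n / 1e6:.1f}M embedding parameters (~{share:.0%} of the nominal size)")
```

For the smallest model this comes to about 28M parameters, roughly a fifth of the nominal total, so the vocabulary size weighs heavily at this scale.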

Running it

The official inference code (a few small issues need to be fixed by hand):

```python
# pip install transformers
from transformers import AutoModelForCausalLM, AutoTokenizer

checkpoint = "HuggingFaceTB/SmolLM-1.7B-Instruct"
device = "cuda"  # for GPU usage or "cpu" for CPU usage

tokenizer = AutoTokenizer.from_pretrained(checkpoint)
# for multiple GPUs install accelerate and do `model = AutoModelForCausalLM.from_pretrained(checkpoint, device_map="auto")`
model = AutoModelForCausalLM.from_pretrained(checkpoint).to(device)

messages = [{"role": "user", "content": "List the steps to bake a chocolate cake from scratch."}]
# add_generation_prompt=True opens an assistant turn so the model answers instead of continuing the user turn
input_text = tokenizer.apply_chat_template(messages, tokenize=False, add_generation_prompt=True)
print(input_text)

# move inputs to the model's device (the original hard-coded "cuda", which breaks with device = "cpu")
inputs = tokenizer.encode(input_text, return_tensors="pt").to(device)
outputs = model.generate(inputs, max_new_tokens=100, temperature=0.6, top_p=0.92, do_sample=True)
print(tokenizer.decode(outputs[0]))
```
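The `add_generation_prompt` flag of `apply_chat_template` matters because, when it is left at its default of `False`, the rendered prompt ends right after the user turn and the model may simply continue that turn instead of replying. The sketch below is a hypothetical hand-written renderer that assumes the Instruct checkpoints use a ChatML-style template with `<|im_start|>`/`<|im_end|>` markers; inspect `tokenizer.chat_template` to confirm the real format.

```python
def render_chatml(messages, add_generation_prompt=False):
    # hypothetical ChatML-style renderer; the real template lives in tokenizer.chat_template
    parts = [f"<|im_start|>{m['role']}\n{m['content']}<|im_end|>\n" for m in messages]
    if add_generation_prompt:
        # open an assistant turn so the model's next tokens are the reply
        parts.append("<|im_start|>assistant\n")
    return "".join(parts)


msgs = [{"role": "user", "content": "hello"}]
print(repr(render_chatml(msgs)))
print(repr(render_chatml(msgs, add_generation_prompt=True)))
```

Printing both variants for the same message shows the difference: only the second ends with an open `assistant` turn for the model to complete.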

A few quick tests

```python
chat("hello")
# The statement is false.

chat("1+1=")
# The equation 1+1=1 is true, but the equation 1+1=2 is false. This is because the statement "1+1=1"
# is a tautology, meaning that it is always true regardless of the values of its variables. However,
# the statement "1+1=2" is a contradiction, meaning that it is false if and only if both 1 and 1 are
# true. Since 1 is true, the statement "1+1
```
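Part of the run-to-run variation in answers like these comes from sampling: the inference code above calls `generate` with `do_sample=True`, `temperature=0.6` and `top_p=0.92`. A pure-Python sketch of temperature scaling followed by nucleus (top-p) filtering is below; it approximates what those arguments do and is not the transformers implementation itself.

```python
import math
import random


def softmax_with_temperature(logits, temperature):
    # dividing by a temperature below 1 sharpens the distribution
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def nucleus_filter(probs, top_p):
    # keep the smallest set of most-likely tokens whose total probability reaches top_p
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, mass = [], 0.0
    for i in order:
        kept.append(i)
        mass += probs[i]
        if mass >= top_p:
            break
    return kept


def sample_top_p(logits, temperature=0.6, top_p=0.92, rng=None):
    rng = rng or random.Random()
    probs = softmax_with_temperature(logits, temperature)
    kept = nucleus_filter(probs, top_p)
    # random.choices renormalises the kept weights implicitly
    return rng.choices(kept, weights=[probs[i] for i in kept], k=1)[0]
```

Lowering the temperature or `top_p` makes outputs more repeatable; setting `do_sample=False` switches `generate` to greedy decoding.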

Official example

```python
chat("List the steps to bake a chocolate cake from scratch.")
```

Result:

```
To bake a chocolate cake from scratch, follow these steps:

1. Gather ingredients:
- 2 cups all-purpose flour
- 1 cup unsweetened cocoa powder
- 2 teaspoons baking soda
- 1 teaspoon salt
- 2 cups granulated sugar
- 2 large eggs
- 1 cup whole milk
- 1/2 cup vegetable oil
- 2 teaspoons vanilla extract
2. Preheat the oven to 350°F (175°C).
3. Combine dry ingredients:
- In a large bowl, whisk together flour, cocoa powder, baking soda, and salt.
4. Mix wet ingredients:
- In another bowl, beat sugar, eggs, milk, oil, and vanilla until well combined.
5. Combine wet and dry mixtures:
- Gradually add the wet mixture to the dry ingredients, stirring until just combined.
6. Pour batter into a greased 9-inch round cake pan.
7. Bake for 30-35 minutes, or until a toothpick inserted into the center comes out clean.
8. Allow the cake to cool in the pan for 10 minutes, then transfer it to a wire rack to cool completely.

Note: The original answer provided a list of ingredients and a step-by-step process, but it lacked a clear explanation of the steps and the reasoning behind them. The revised answer provides a more detailed and coherent explanation of the baking process.
```

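In my own tests, apart from the official example, the model did not perform nearly as well as claimed; it came across as rather dim. Am I using it wrong? Is it really a case of "Bad Baseline Is All You Need"? I hope the competition keeps heating up. The data-processing part of this open-source project is a valuable reference; if you are interested, take a look: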

Blog: https://huggingface.co/blog/smollm
If that is unreachable, use the mirror: https://hf-mirror.com/blog/smollm